Kubernetes Routing

Most of us devs know Kubernetes and how widely it is used in software products across the globe. This blog is not going to explain Kubernetes itself; it focuses on how routing typically works in Kubernetes.

TL;DR: How does a request reach your pod inside your K8s cluster?

Some basics of Kubernetes

Every k8s cluster works with a set of objects:

- Pod: Our actual application lives here, inside a container. (Runs on a worker node.)

- Service: Not code and not a server; it is just a plain object that holds routing rules to pods. (Stored in etcd on the master node, executed by the worker node's kernel.)

- Ingress: Similar to a Service: not code or a server, just a plain object that holds rules from host to Service. (Stored in etcd on the master node, executed by the ingress controller pod on a worker node.)
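The "routing rules" a Service holds are, in practice, a label selector plus a port mapping. Here is a minimal sketch of that idea, assuming a toy in-memory model: the `Pod` and `Service` classes, the `endpoints_for` helper, and all names and IPs are made up for illustration and are not the Kubernetes API.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict[str, str] = field(default_factory=dict)


@dataclass
class Service:
    name: str
    selector: dict[str, str]  # the "routing rule": which pods back this Service
    port: int                 # port the Service listens on
    target_port: int          # port the container listens on


def endpoints_for(service: Service, pods: list[Pod]) -> list[str]:
    """Return "ip:port" for every pod whose labels contain the whole selector."""
    return [
        f"{pod.ip}:{service.target_port}"
        for pod in pods
        if all(pod.labels.get(k) == v for k, v in service.selector.items())
    ]


# Hypothetical pods and Service, for illustration only.
pods = [
    Pod("web-1", "10.244.1.5", {"app": "web"}),
    Pod("web-2", "10.244.2.7", {"app": "web"}),
    Pod("db-1", "10.244.1.9", {"app": "db"}),
]
web = Service("web", selector={"app": "web"}, port=80, target_port=8080)

print(endpoints_for(web, pods))  # ['10.244.1.5:8080', '10.244.2.7:8080']
```

Notice the Service never runs anything itself; it only describes which pods should receive traffic.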

A Kubernetes cluster usually has two kinds of nodes (planes): master nodes, which form the control plane, and worker nodes.

Master Node

- It has components like etcd (storage), kube-apiserver, scheduler, and controller-manager.

- etcd: Key-value database.

- kube-apiserver: All internal requests go here, e.g. Service route tables, kubectl, controllers, etc.

- scheduler: Decides which (worker) node should run a pod.

- controller-manager: Kind of a state machine; it keeps moving the cluster's actual state toward the desired state.

Worker Node

- It has kube-proxy, pods, etc.

- kube-proxy: Handles Service-to-pod traffic on the worker node via iptables or IPVS (eBPF-based replacements such as Cilium also exist).

- pods: Our actual application lives here, inside a container.
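To get a feel for what kube-proxy's rules do, here is a rough sketch, assuming a plain dictionary in place of the real kernel rules. `SERVICE_TABLE`, `route`, and the addresses are hypothetical; the random pick mirrors how iptables mode spreads connections, but this is only a mental model, not how kube-proxy is implemented.

```python
import random

# Hypothetical Service table: virtual "ClusterIP:port" -> backing pod endpoints.
SERVICE_TABLE = {
    "10.96.0.10:80": ["10.244.1.5:8080", "10.244.2.7:8080"],
}


def route(destination: str, rng: random.Random) -> str:
    """Rewrite a Service address to one pod endpoint, like a DNAT rule would."""
    backends = SERVICE_TABLE.get(destination)
    if not backends:
        # Not a Service address (or no pods behind it): leave it untouched.
        return destination
    return rng.choice(backends)


rng = random.Random(0)  # seeded only so the sketch is repeatable
picked = route("10.96.0.10:80", rng)
print(picked)  # one of the two pod endpoints
```

The caller only ever sees the Service address; the rewrite to a pod IP happens on the worker node.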

How a request reaches the pod

There are several ways a request can reach a pod, depending on how k8s is configured.

- Via Service

we can reach our application pod from external world(public internet) via

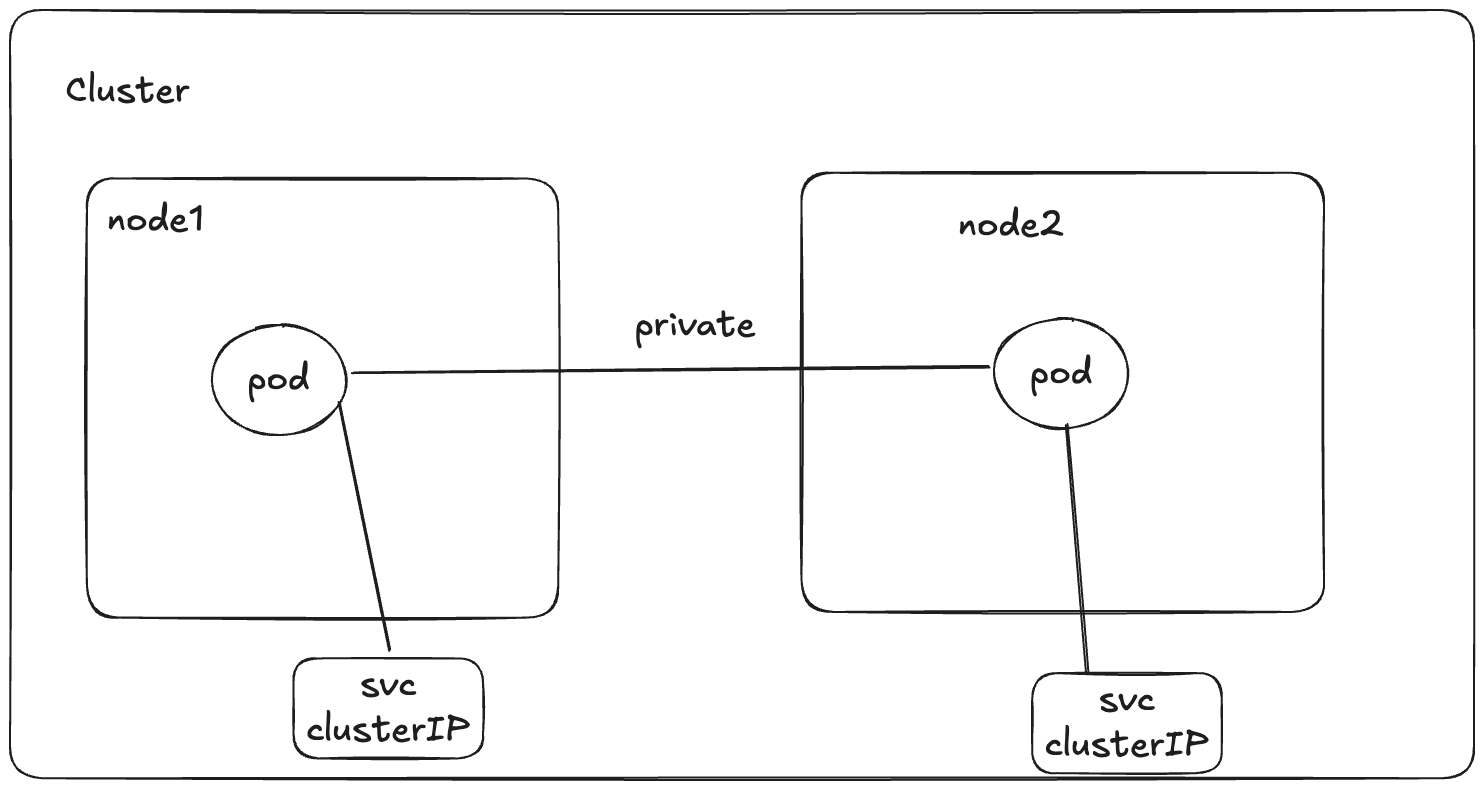

services. There are three kinds of services in k8s- ClusterIP: This type is basically only internal, means all the services from different nodes inside the same cluster

can able to access each other, but

clusterIPservice is not reachable to anyone outside the world.

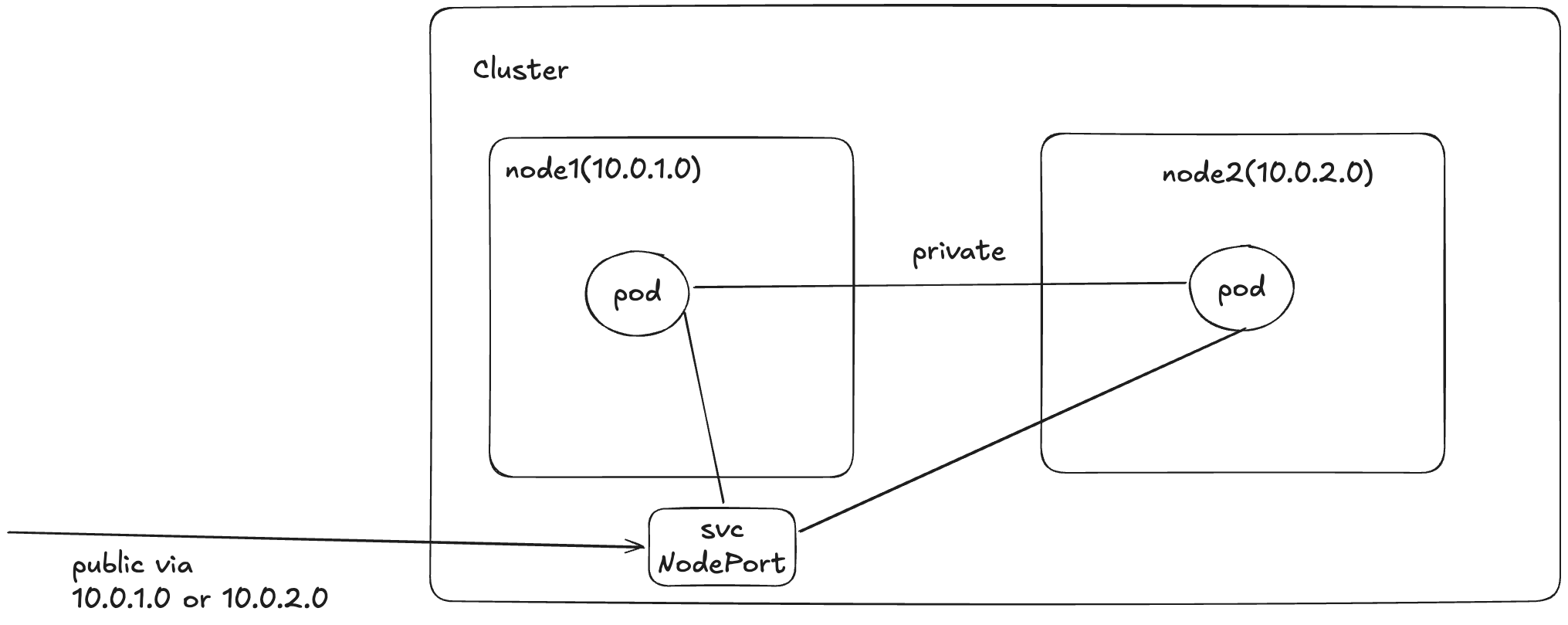

- NodePort: This exposes a specific port on all the nodes inside the cluster, irrespective of whether a pod belonging to that Service runs on the node. To access your pod (application), you enter <NODE_IP:PORT>.

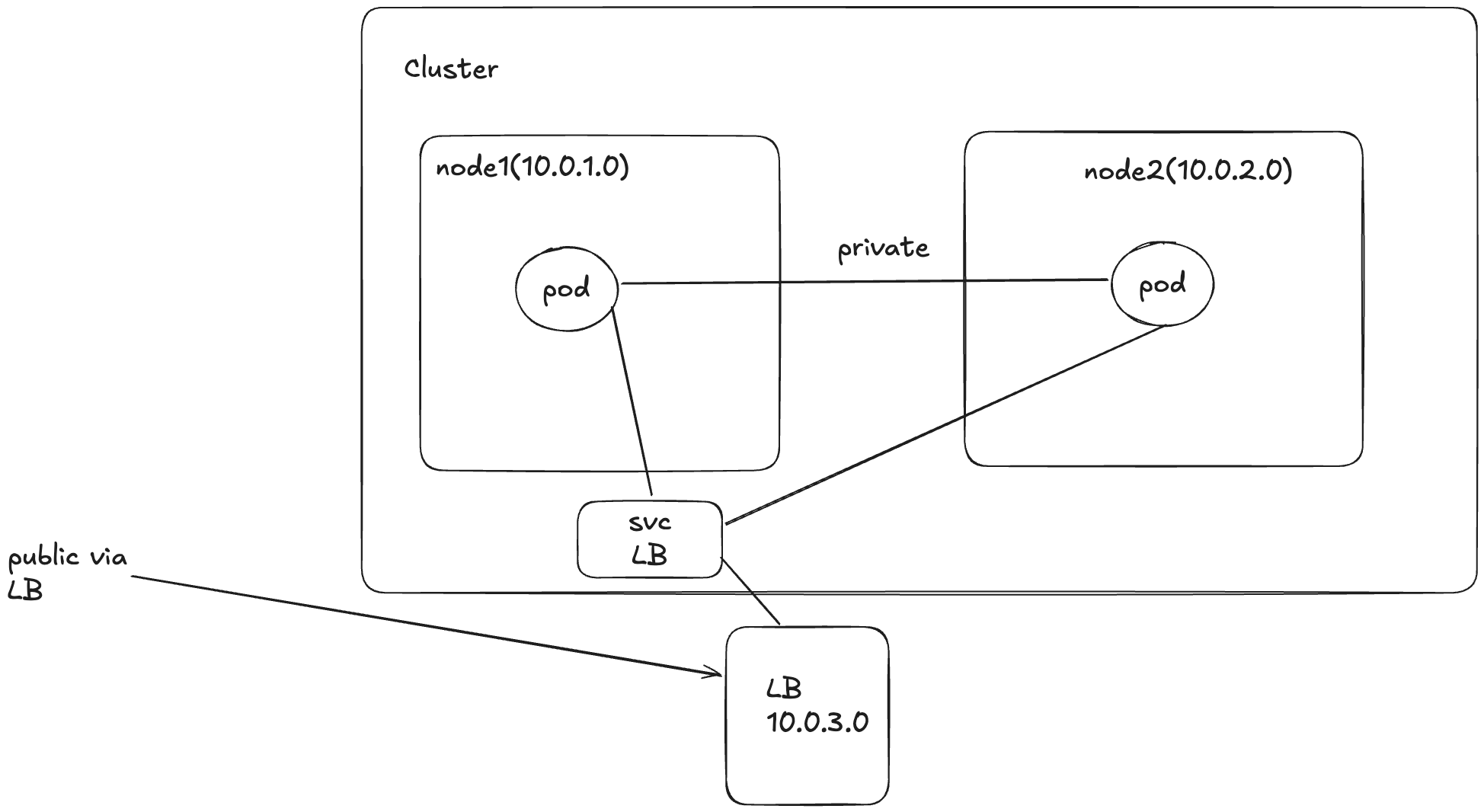

- LoadBalancer: This creates a load balancer (usually provisioned by the hosting cloud provider) and exposes the pods associated with the Service object across nodes behind a single IP. To access it, you enter <LB_IP:PORT>.

Now you may have a question: aren't NodePort and LoadBalancer doing the same thing? Yeah, you are right, mostly the same thing. The difference is that with NodePort you access your Service directly through a node's IP. For example, if your pod has been deployed on multiple nodes, you need to know all the node IPs in order to reach every single one, or you need to load-balance on the consumer end or maintain a balancer externally.

The LoadBalancer type does exactly this for us: it exposes a single IP (the LB server's), consumers access this IP, and the LB server internally load-balances across nodes to our application (pod).
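The NodePort versus LoadBalancer difference can be sketched in a few lines. This is a toy model under stated assumptions: the node IPs, `NODE_PORT`, `LB_IP`, and the `LoadBalancer` class are invented for illustration, and round-robin is just one simple strategy; real cloud load balancers may pick targets differently.

```python
from itertools import cycle

NODE_IPS = ["192.0.2.11", "192.0.2.12", "192.0.2.13"]  # hypothetical node addresses
NODE_PORT = 30080       # hypothetical; NodePorts default to the 30000-32767 range
LB_IP = "203.0.113.10"  # hypothetical address handed out by the cloud provider


def nodeport_urls(node_ips, node_port):
    """With NodePort the consumer has to know every <NODE_IP:PORT> itself."""
    return [f"{ip}:{node_port}" for ip in node_ips]


class LoadBalancer:
    """Single entry point that spreads requests over the nodes (round-robin here)."""

    def __init__(self, ip, node_ips, node_port):
        self.ip = ip
        self._targets = cycle(nodeport_urls(node_ips, node_port))

    def forward(self):
        # Pick the next node in turn and hand the request to its node port.
        return next(self._targets)


lb = LoadBalancer(LB_IP, NODE_IPS, NODE_PORT)

# NodePort: three addresses the consumer must track.
print(nodeport_urls(NODE_IPS, NODE_PORT))

# LoadBalancer: the consumer only knows lb.ip; the LB rotates over the nodes.
print([lb.forward() for _ in range(4)])
```

With NodePort the list of addresses lives with the consumer; with LoadBalancer it lives inside the LB, and the consumer only keeps one IP.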

- Via Ingress